GPT-4 API Best Practices: How to Build Reliable, Scalable, and High-Quality AI Applications

GPT-4 has rapidly become a core component in AI-powered products, enabling natural language understanding, creative generation, research assistance, customer support, and automated reasoning. But achieving consistently high-quality results isn’t as simple as sending a prompt and reading the response. Getting the most out of the GPT-4 API requires thoughtful prompt design, context management, safety integration, and parameter tuning.

This article outlines the most important best practices for implementing the GPT-4 API effectively.

🧠 1. Start With a Strong System Prompt

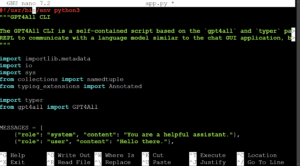

Every GPT-4 chat request is a list of messages, each tagged with a role: system, user, or (optionally) assistant, which carries the model’s earlier replies. The system prompt is vital because it defines the model’s behavior.

Weak system prompt:

“You are an AI assistant.”

Strong system prompt:

“You are a highly reliable assistant that responds concisely with factual, verifiable information. When uncertain, say ‘I’m not sure’ instead of guessing.”

A well-defined system prompt can substantially reduce hallucinations and inconsistency.
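A minimal Python sketch of the idea: a helper that always places the strong system prompt first, followed by any earlier turns and the new user input. `build_messages` is a hypothetical name, and the commented-out call assumes the official `openai` Python package (v1 or later) with an `OPENAI_API_KEY` environment variable set.

```python
from __future__ import annotations

# System prompt taken from the "strong" example above.
SYSTEM_PROMPT = (
    "You are a highly reliable assistant that responds concisely with factual, "
    "verifiable information. When uncertain, say 'I'm not sure' instead of guessing."
)


def build_messages(user_input: str, history: list[dict] | None = None) -> list[dict]:
    """Return a chat message list that always starts with the system prompt."""
    messages = [{"role": "system", "content": SYSTEM_PROMPT}]
    # Earlier user/assistant turns, if any, sit between the system prompt and the new input.
    messages.extend(history or [])
    messages.append({"role": "user", "content": user_input})
    return messages


# Sending the messages (not executed here; assumes `pip install openai` and OPENAI_API_KEY):
# from openai import OpenAI
# client = OpenAI()
# reply = client.chat.completions.create(model="gpt-4", messages=build_messages("What is TCP?"))
# print(reply.choices[0].message.content)
```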

🎯 2. Be Explicit About Output Requirements

GPT-4 guesses at what you want unless you specify it clearly. Never assume a format; enforce it.

Example formatting instructions:

“Answer with bullet points”

“Output only valid JSON — no commentary”

“Use Markdown with H2 headings”

“Provide a step-by-step numbered plan”

Providing templates works best of all:

{
  "topic": "",
  "summary": "",
  "key_points": [],
  "sources": []
}

Well-structured outputs reduce parsing errors and improve application reliability.
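One way to enforce the template, sketched in Python under the assumption that model replies arrive as plain strings: embed the template in the instruction, then reject any reply that is not a JSON object containing the expected keys. `format_instruction` and `parse_reply` are hypothetical helpers.

```python
import json

# The output template above, as a Python dict.
TEMPLATE = {"topic": "", "summary": "", "key_points": [], "sources": []}


def format_instruction(template: dict) -> str:
    """Build an instruction asking for JSON that matches the template exactly."""
    return (
        "Output only valid JSON, with no commentary, using exactly this structure:\n"
        + json.dumps(template, indent=2)
    )


def parse_reply(text: str, template: dict) -> dict:
    """Parse a reply; raise ValueError unless it is a JSON object containing every template key."""
    data = json.loads(text)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = set(template) - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    return data
```

Newer GPT-4 models also offer a JSON mode through the `response_format` parameter; check whether the model you use supports it. Parsing the reply yourself is still worthwhile, because JSON mode guarantees valid syntax, not the presence of your particular keys.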

💾 3. Manage Context Intelligently

The API is stateless: the model doesn’t “remember” the conversation and sees only the tokens included in each request.

To prevent context loss and rising token costs:

Summarize old messages instead of passing the entire chat history

Keep essential data in structured memory rather than free text

Restate critical instructions periodically

For large documents, provide only the excerpts relevant to the query rather than the whole file.
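A minimal Python sketch of the first and third points: keep system messages (and therefore the critical instructions) at the top, keep the most recent turns verbatim, and fold everything older into a single summary message. `compact_history` is hypothetical, and `summarize` stands in for whatever you use to condense text, for example a separate model call.

```python
from __future__ import annotations

from typing import Callable


def compact_history(
    messages: list[dict],
    keep_last: int,
    summarize: Callable[[list[dict]], str],
) -> list[dict]:
    """Keep system messages and the last `keep_last` turns; fold older turns into one summary."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    if len(turns) <= keep_last:
        return messages  # nothing to compact yet
    split = len(turns) - keep_last
    older, recent = turns[:split], turns[split:]
    summary = {
        "role": "system",
        "content": "Summary of the earlier conversation: " + summarize(older),
    }
    # System instructions stay first, so critical rules are restated on every request.
    return system + [summary] + recent
```

Putting the summary in a system message is a design choice; a short user-role note would also work.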

⚙️ 4. Control Creativity With Parameters

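Temperature and related sampling settings strongly influence behavior:

| Parameter | Effect |
|---|---|
| temperature | Higher = more varied, creative output; lower = more focused, deterministic output |
| top_p | Nucleus sampling; an alternative to temperature (adjust one or the other, not both) |
| presence_penalty | Penalizes tokens that have already appeared, encouraging new topics |
| frequency_penalty | Penalizes tokens in proportion to how often they have appeared, reducing repetition |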

Good defaults:

For factual / analytical tasks → temperature: 0 – 0.3

For creative tasks → temperature: 0.7 – 1.1
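The starting points can be captured as named presets. This Python sketch's preset values and the `request_params` helper are hypothetical, while the checked bounds (temperature 0 to 2, top_p 0 to 1, penalties -2 to 2) follow the ranges documented for the OpenAI Chat Completions API. Because OpenAI recommends adjusting `temperature` or `top_p` but not both, the helper drops the preset temperature when only `top_p` is overridden.

```python
# Hypothetical presets based on the starting points above; tune them for your workload.
PRESETS = {
    "factual": {"temperature": 0.2},
    "creative": {"temperature": 0.9, "presence_penalty": 0.5},
}

# Documented ranges for the OpenAI Chat Completions sampling parameters.
RANGES = {
    "temperature": (0.0, 2.0),
    "top_p": (0.0, 1.0),
    "presence_penalty": (-2.0, 2.0),
    "frequency_penalty": (-2.0, 2.0),
}


def request_params(task: str, model: str = "gpt-4", **overrides: float) -> dict:
    """Merge a task preset with overrides and check each value against its range."""
    params = {"model": model, **PRESETS[task]}
    if "top_p" in overrides and "temperature" not in overrides:
        # Adjust temperature or top_p, not both: drop the preset temperature.
        params.pop("temperature", None)
    params.update(overrides)
    for name, (low, high) in RANGES.items():
        if name in params and not low <= params[name] <= high:
            raise ValueError(f"{name}={params[name]} is outside [{low}, {high}]")
    return params
```

With the `openai` package, the result can be unpacked into a request, e.g. `client.chat.completions.create(messages=msgs, **request_params("factual"))`.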

🔁 5. Use Iterative Prompting Instead of One Giant Request

Instead of asking GPT-4 to do everything in a single prompt, break the task into steps.

Example pipeline:

Extract structured information

Analyze it

Generate summary or output

Splitting the work this way tends to reduce hallucinations and improve accuracy, especially in complex workflows.
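The three-step pipeline above might look like this in Python. `call_model` is a hypothetical function that sends one prompt and returns the reply text, which keeps the sketch testable with a stub; the extraction fields (dates, people, amounts) are illustrative only.

```python
import json
from typing import Callable

# Hypothetical: sends one prompt to GPT-4 and returns the reply text.
ModelFn = Callable[[str], str]


def run_pipeline(document: str, call_model: ModelFn) -> str:
    # Step 1: extract structured information, and nothing else.
    extracted = call_model(
        "Extract every date, person, and amount from the text below. Output only JSON "
        'shaped like {"dates": [], "people": [], "amounts": []}.\n\n' + document
    )
    facts = json.loads(extracted)  # fail fast if step 1 did not return valid JSON
    # Step 2: analyze the extracted facts rather than the raw document.
    analysis = call_model("Analyze these facts and list any inconsistencies:\n" + json.dumps(facts))
    # Step 3: generate the final output from the analysis.
    return call_model("Write a three-sentence summary based on this analysis:\n" + analysis)
```

Parsing the step 1 output before continuing means a malformed extraction fails loudly instead of silently contaminating the later steps.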

🧪 6. Validate Outputs — Don’t Trust Blindly

Even well-prompted models can produce incorrect information. Use automated checks when possible:

JSON schema validation

Rule-based verification (dates, numbers, formatting)

URL and citation validation

Consistency cross-checks (ask GPT-4 to verify its own output)

Reliability and safety both depend on validation.
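A Python sketch of the first three checks, applied to the JSON template from section 2. The optional `published` date field is a hypothetical addition used to illustrate a rule-based check, and the URL test only confirms that each source is a well-formed absolute http(s) URL; it does not fetch the page. For full JSON Schema validation, the third-party `jsonschema` package (`jsonschema.validate(instance, schema)`) is one option.

```python
from datetime import date
from urllib.parse import urlparse

# Expected top-level keys and types for the template from section 2.
EXPECTED_TYPES = {"topic": str, "summary": str, "key_points": list, "sources": list}


def validate_summary(data: dict) -> list:
    """Return human-readable problems found in a parsed reply; an empty list means it passed."""
    problems = []
    # Schema-style check: every key present with the expected type.
    for key, expected in EXPECTED_TYPES.items():
        if not isinstance(data.get(key), expected):
            problems.append(f"{key} is missing or not a {expected.__name__}")
    # Rule-based check on a hypothetical optional field: a valid ISO date, not in the future.
    if "published" in data:
        try:
            if date.fromisoformat(data["published"]) > date.today():
                problems.append("published date is in the future")
        except (TypeError, ValueError):
            problems.append("published is not an ISO date (YYYY-MM-DD)")
    # URL check: each source must be an absolute http(s) URL (syntax only; nothing is fetched).
    sources = data.get("sources")
    if isinstance(sources, list):
        for url in sources:
            parts = urlparse(str(url))
            if parts.scheme not in ("http", "https") or not parts.netloc:
                problems.append(f"invalid source URL: {url!r}")
    return problems
```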

🚀 7. Cache and Reuse GPT-4 Results

If your application repeatedly calls GPT-4 with the same or similar requests, caching improves performance and reduces costs.

Examples:

Precompute support responses

Store embeddings for repeated semantic search

Memoize final reasoning results in conversational bots

Cache keys should include the prompt, model, and temperature to ensure consistency.
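A minimal in-memory Python sketch. The key hashes the model, the full message list, and the temperature, so changing any of them produces a cache miss. `ResponseCache` and the injected `call_model` function are hypothetical; a production system would more likely use a shared store such as Redis.

```python
from __future__ import annotations

import hashlib
import json
from typing import Callable


def cache_key(model: str, messages: list[dict], temperature: float) -> str:
    """Stable key derived from everything that affects the response."""
    payload = json.dumps(
        {"model": model, "messages": messages, "temperature": temperature},
        sort_keys=True,  # identical inputs always serialize identically
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class ResponseCache:
    """Calls the model only on a cache miss and stores the reply under the computed key."""

    def __init__(self, call_model: Callable[[str, list[dict], float], str]):
        self._call_model = call_model  # hypothetical function that performs the real API call
        self._store: dict[str, str] = {}

    def get(self, model: str, messages: list[dict], temperature: float = 0.0) -> str:
        key = cache_key(model, messages, temperature)
        if key not in self._store:
            self._store[key] = self._call_model(model, messages, temperature)
        return self._store[key]
```

Caching fits best with low-temperature requests: at higher temperatures a cache returns the same sampled answer every time, removing the variety the setting was chosen for.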

🛡️ 8. Implement Guardrails

To avoid unsafe or undesired content:

Set clear refusal and redirection rules within the system prompt

Use moderation endpoints for user input and model output

Maintain lists of prohibited content (e.g., no medical diagnoses)

Add logic for blocking or rewriting dangerous requests

Trust the model — but verify before presenting responses to users.
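A deliberately simple Python sketch of checking both the user input and the model output. The keyword rules and refusal text are hypothetical and far cruder than a real moderation service; with the `openai` package, `client.moderations.create(input=text)` returns results whose `flagged` field could complement or replace `violations`.

```python
from typing import Callable

# Hypothetical rules: each prohibited category maps to trigger keywords. Keyword matching
# is a crude stand-in for a real moderation service, not a substitute for one.
PROHIBITED = {
    "medical diagnosis": ("diagnose", "diagnosis"),
}
REFUSAL = "I can't help with that here. Please consult a qualified professional."


def violations(text: str) -> list:
    """Return the names of prohibited categories that the text appears to touch."""
    lowered = text.lower()
    return [name for name, words in PROHIBITED.items() if any(w in lowered for w in words)]


def guarded_reply(user_input: str, generate: Callable[[str], str]) -> str:
    # Check the user's input before spending a model call on it.
    if violations(user_input):
        return REFUSAL
    reply = generate(user_input)  # hypothetical function that calls GPT-4
    # Check the model's output before it reaches the user.
    if violations(reply):
        return REFUSAL
    return reply


# With the openai package, a moderation check could complement the rules (not executed here):
# flagged = client.moderations.create(input=user_input).results[0].flagged
```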

📊 9. Log Everything for Monitoring and Improvement

For production systems:

Save prompts, responses, and metadata (temperature, model version)

Track failure cases (invalid JSON, hallucinations, safety flags)

Build regression tests before updating models

Logging turns AI quality from guesswork into measurable control.
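A Python sketch of structured logging in JSON Lines format (one record per call), plus a helper that pulls failed calls back out as candidates for a regression suite. The field names and failure labels (such as `invalid_json`) are hypothetical conventions.

```python
import json
import time
from typing import Iterable, Optional, TextIO


def log_call(out: TextIO, *, model: str, temperature: float, prompt: str,
             response: str, failures: Optional[list] = None) -> None:
    """Append one JSON record per model call (JSON Lines format)."""
    record = {
        "ts": time.time(),           # Unix timestamp of the call
        "model": model,              # exact model version used
        "temperature": temperature,
        "prompt": prompt,
        "response": response,
        "failures": failures or [],  # e.g. ["invalid_json", "safety_flag"]
    }
    out.write(json.dumps(record) + "\n")


def failed_records(lines: Iterable[str]) -> list:
    """Collect logged calls that recorded failures, e.g. to seed a regression test set."""
    records = (json.loads(line) for line in lines if line.strip())
    return [r for r in records if r["failures"]]
```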

🧩 10. Treat GPT-4 as a Reasoning Engine, Not a Database

GPT-4 excels at:

reasoning

language understanding

explanation

summarization

analysis

planning

GPT-4 does not replace:

SQL databases

search engines

real-time sensors

authoritative scientific sources

Combine GPT-4 with traditional software systems for the best results.
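As an illustration of pairing the two, this Python sketch looks up authoritative facts in SQL and passes only those facts to GPT-4, leaving the model to phrase the answer. The `products` table and its columns are hypothetical; the code uses Python's built-in `sqlite3` module.

```python
import sqlite3


def build_grounded_prompt(conn: sqlite3.Connection, sku: str, question: str) -> str:
    """Fetch authoritative facts from SQL and ask GPT-4 to answer from those facts only."""
    # Hypothetical schema: products(sku, name, price_cents, stock)
    row = conn.execute(
        "SELECT name, price_cents, stock FROM products WHERE sku = ?", (sku,)
    ).fetchone()
    if row is None:
        facts = "No product found for this SKU."
    else:
        name, price_cents, stock = row
        facts = f"name={name}; price=${price_cents / 100:.2f}; in_stock={stock}"
    return (
        "Answer using only the facts below. If they are insufficient, say so.\n"
        f"Facts: {facts}\n"
        f"Question: {question}"
    )


# Usage with an in-memory database:
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE products (sku TEXT PRIMARY KEY, name TEXT, price_cents INTEGER, stock INTEGER)")
conn.execute("INSERT INTO products VALUES ('A1', 'Desk lamp', 2499, 12)")
print(build_grounded_prompt(conn, "A1", "How much is the desk lamp?"))
```

The database stays the source of truth for prices and stock; GPT-4 only turns those facts into a readable answer.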

Building a reliable AI application with the GPT-4 API requires much more than calling an endpoint: it takes well-designed instructions, context management, safety measures, validation, and performance optimization.