The Frequently Asked Questions Rentry

Perhaps you may not have noticed, but a lot of us are quite bored of answering the same questions over and over again. Things have changed since March of this year, and so a new FAQ needs to exist so new or returning Anons can come here instead of asking the same shit over and over again.

Once again, out of respect for what was before, here's a link to the original FAQ. I'll be reworking still-valid sections into here in the interests of convenience and not reinventing the wheel.

- Context

- GPT-6B-J

- GPT-NeoX-20B

- Storyteller Agnostic

- NovelAI

- What is NovelAI?

- How much does NovelAI cost?

- What models does NovelAI have available?

- What do we know about their fine-tuning dataset?

- What advantages does NovelAI have over AI Dungeon?

- The default settings aren't doing it for me, but I don't know what to do regarding generation options. Help?

- Can Sigurd understand [ESTABLISHED FRANCHISE]?

- Is there any place I can go to see if others have made lorebooks of my favorite things?

- What are 'Story Parameters'?

- What are 'AI Modules'?

- What is 'Phrase Biasing'?

- Who the fuck is Turk?

- Who the fuck is finetuneanon?

- Who the fuck is Aini?

- HoloAI

- KoboldAI

- /aids/

Context

So what's the current situation?

Everyone who is sane has progressed from AI Dungeon to three major avenues: NovelAI, HoloAI, and KoboldAI with Colab. NovelAI has the most features, HoloAI is the least expensive option, and KoboldAI with Colab is for those who are broke but technologically competent.

A new model has come out of fucking nowhere, Fairseq 13B. It's quirky but intelligent, and NovelAI have incorporated it into their line of models as the fine-tuned Euterpe.

OpenAI Dragon is no longer offered by Latitude.

What the fuck happened to AI Dungeon?

On April 27th 2021, Mormon and Co. decided to implement an automatic regex filter to prevent AI generation and flag user stories if they involved sexualization of a minor. Like all forms of moral panic over user generated creative content, the initiative went about as well as you might expect.

It gets worse. On August 17th 2021, Latitude released a blog post detailing a hackjob GPT-6B-J solution, and on September 30th 2021, Latitude pulled back on private story reading and suspension systems altogether for a 'see no evil, hear no evil, speak no evil' approach. So long as you don't put your raunchy stuff up on explore, you won't be banned. You'll still be stopped by their filter, though.

Finally, on December 31st 2021, Latitude made the financial decision to shelve OpenAI Dragon permanently, leaving only the AI21 edition of Dragon left for users. It... sucks.

But hey, they've got a new merchandising store! No I'm not going to link it, it's awful.

Well, I don't like sexualizing minors, so I'm fine!

Ignoring the fact that we're talking about literal fucking text, no, you aren't.

Let's say you have a tale with no terms of endearment, no sexual content, no profanity, and, shit, you're a thesaurus whiz.

Even with all of that, you'll have to work to keep the fucking AI from going down that path in the first place. Mormon's meticulously curated CYOA fine-tune data also includes shady kiddie fucking and other amusing scenarios. Triggers are something you'll always have to watch out for when doing short cooms, and they're unavoidable for anything longer.

Also, even if the focus of your cooms isn't anything "unsavory", the very fact that you're doing it will trigger OpenAI's filter, which Mormon has now put in place for Dragon. So yeah, you are not fine.

But wait, these alternatives lack Davinci's 175B parameters!

Yes, that is true. As of the time of writing, all three use EleutherAI's 6B model. NovelAI also offers Fairseq 13B, but that's limited to Opus tier and is still in its early stages. But there are three major strengths they all share that help with the pain: 2048 tokens, freedom, and the lack of bad fine-tuning.

When Mormon inserted his insipid kid diddling second person dataset for fine-tuning, he effectively crippled the ability of both Griffin (which is 6.7B parameters, by the way) and Dragon. Even at the initial release of GPT-6B-J when the only way to use it was through a website hosted by EleutherAI, we quickly realized how much bad fine-tuning can ruin a perfectly good model.

Previously in this section I said:

GPT-6B-J can't compete with Dragon's highest highs, and it can't hope to achieve some of the major cooms we were able to get out of summer Dragon, but it's pretty fucking good as is, and if you're willing to be creative and malleable, you'll have a much better time with it than AI Dungeon today.

13B is here to add a big fat asterisk to that. Even with the work-in-progress fine-tuned model NAI is offering, many can attest to the fact that we're beyond the threshold of understanding intent. Despite a lack of modules, and in its first complete version, 13B is punching in Dragon's weight class. When it works, anyway.

Plus there's 20B now, which just about all the premium services offer. It's smarter than 13B for sure, but is still definitely in its early stages.

You do not need the 175B parameters to have the good times you remember. If you're ready to wait, or spend $25, you can see this for yourself right now.

Do I return to AI Dungeon now that Latitude won't ban me for my stories?

If you're genuinely asking this, I seriously doubt your intelligence.

What if unpublished content goes against third-party policies?

If third party providers that we leverage have different content policies than Latitude's established technological barriers then if a specific request doesn't meet those policies that specific request will be routed to a Latitude model instead.

Latitude on how dumb you are for thinking you can have fun on AI Dungeon today.

While Latitude will not ban you anymore for private stories, their filter will prevent you from continuing your generations. Even if you are able to bypass that filter (which they are still improving and plan to extend to other topics), you won't be able to pass OpenAI's filter on a consistent basis. That is still in effect. Any output that does pass will be sent to OpenAI, who monitor for unsafe content, presumably to continue improving their own filter so you won't be able to do it the next time.

As a result, you'll still be directed to their AI21 Dragon model. A model that has been fine-tuned on shit, with significantly less usability and features than Holo, Novel, or Kobold.

Returning to AI Dungeon is unwise. If you're asking this seriously, you're either misinformed or mentally challenged.

With the higher traffic we had a volume-based discount that we will no longer have since most users transitioned away. Because of that we would now pay the normal token price which is 12 cents / 1000 tokens (which is around 1 action) for a fine-tuned model. That would mean every 100 actions would cost $12.

Given this change and that most users can’t have a good experience with the new filters, Dragon-OA isn’t going to be offered starting in the new year.
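The arithmetic in that quote checks out. A quick sketch using only the figures Latitude gave (12 cents per 1000 tokens, roughly 1000 tokens per action); the function name is made up for illustration:

```python
# Figures taken from the quote above; nothing here is Latitude's actual billing code.
PRICE_PER_1K_TOKENS = 0.12   # dollars per 1000 tokens for a fine-tuned model
TOKENS_PER_ACTION = 1000     # "around 1 action" per 1000 tokens, per the quote


def cost_of_actions(actions: int) -> float:
    """Estimated dollar cost of a number of actions at the quoted token price."""
    tokens = actions * TOKENS_PER_ACTION
    return tokens / 1000 * PRICE_PER_1K_TOKENS


print(f"100 actions: ${cost_of_actions(100):.2f}")
```

At that rate a heavy user burning a few hundred actions a day costs more per day than any subscription here costs per month.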

GPT-6B-J

Is it as good as Dragon?

If we're talking the original OpenAI Dragon, 6B gets the win just for continuing to exist.

Serious answer: 6B's knowledge and understanding of writer intent is lacking, which makes complicated scenarios almost impossible without serious intervention. Both premium storyteller services offer a plethora of methods to circumvent this without writing, but some would say that only accentuates the point about requiring a lot of work.

If we're talking about AI21's 178B Dragon, it's better. Seriously.

Is it as good as Davinci?

Obviously not. But OpenAI won't allow you to use Davinci for anything "ghastly", so why even ask?

GPT-NeoX-20B

Is it happening?

It's happened.

When will it be done?

It's here.

Is it good?

Hard to say. Actually using the model vs. comparing its eval performance against the amazing Fairseq 13B has yielded a wide array of conflicting opinions about its strength. This problem only intensified with NovelAI offering fine-tuned models based on each (Krake for 20B and Euterpe for 13B). It's best to try it out for yourself and decide.

Storyteller Agnostic

How do I write prompts?

This is a difficult question to answer, so I'll try to do so based on my own experience.

If you've never done this before, a prompt doesn't have to be much more than the establishment of an easily understandable premise, possibly with accompanying memory, A/N, or world information to help reinforce the concepts that premise utilizes. Seriously.

You can write in whatever perspective and tense you want, but consider first person if you're only writing in second person. Alternatively, don't, I don't give a fuck.

You don't need to write more than a paragraph if you have a good basic idea. If your idea is worth more than one paragraph, then by all means continue writing. Write as much as you want, so long as the end of your prompt is open enough to allow for agency. The only true limit is the context size (2048 tokens / 8192 characters), which can be circumvented by the aforementioned memory, A/N, and WI to bring relevant concepts back into context.
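If you want a feel for how much room a prompt eats, here's a rough budget check. It assumes the 4 characters per token ratio implied by the figures above (8192 / 2048); real tokenizers vary with the text, and the function names are made up for illustration.

```python
CONTEXT_TOKENS = 2048
CHARS_PER_TOKEN = 8192 // CONTEXT_TOKENS  # 4, the ratio implied by the figures above


def estimate_tokens(text: str) -> int:
    """Very rough token estimate; an actual tokenizer will give different counts."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def remaining_budget(prompt: str, memory: str = "", authors_note: str = "",
                     world_info: tuple = ()) -> int:
    """Estimated tokens left once everything that sits in context is counted."""
    parts = (prompt, memory, authors_note, *world_info)
    return CONTEXT_TOKENS - sum(estimate_tokens(part) for part in parts)


print(remaining_budget("a" * 4000, memory="b" * 400))
```

A negative result means something has to fall out of context, which is usually the oldest story text.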

Prompts can be long or they can be short. They can have extensive World Info entries for an open world feel, or they can be minimalist short stories exploring specific moments. Just about any story can serve as a great prompt—so long as you account for choice.

Unless you specifically want to be stringent. Just don't expect others to want that, too.

However, there is a catch that can only be solved through testing of the prompt. You must ensure that the AI understands the general direction for at least a few generations. Freeform writing means any kind of concept you want to bring across to the AI can be, but you must be prepared to discard specifics if the AI becomes overwhelmed, barely remembering all of them. It isn't a mind reader, nor is it omniscient. No matter how good these AI models become, we'll always be smarter than them.

And if after all that you find that the AI can't do it to a satisfactory standard, look to modules and phrase biases to drive it home.

The final, most important thing to remember is the effect your own writing style has on outputs. If you discover that the AI isn't producing the exquisite prose you had in your head, consider everything you had previously written. Spelling errors, grammatical errors, and even less concrete things like a fast-paced narrative or scattered focus can all be translated into AI outputs you probably don't want. Intend every word you write. It's best to set a good example. Remember that, and you'll go a long way.

TL;DR: just start writing, lol.

I'd like more surprise in my stories

Consider using "Suddenly," in your inputs, or specifically noting the fast-paced unexpected nature of the story in Author's Note.

What's the difference between Author's Note/Memory/World Info?

It's all about positioning.

Memory is located at the farthest back of context and remains there as long as something is entered into it. The only times memory isn't in context are when it's empty or when there's a token space problem so severe it must be shortened. Its purpose, then, is to provide knowledge for the AI to use. Direct continuations aren't a problem here as it's so far back, but this might also lead to the AI ignoring what is here, especially if what is further down/more relevant contradicts it. Memory is best used for facts pertinent to the situation your story is in.

Author's Note can be found further down, near the bottom of context. It, therefore, has a strong effect on the AI's generations due to its proximity to the bottom, frequently resulting in generations directly continuing what you put there. A/N, like Memory, always exists as long as you've written something for it, but it has a higher priority than both Memory and World Info, and would be one of the last things to be truncated if you ran out of token space. As a result, Author's Note should be used for immediate and relevant information, whether it is a summary of what is currently happening, a harbinger of what's about to happen, or even a meta comment on things.

World Info, placed at the back along with Memory, works differently to these previous two. It operates on keywords, which it searches through context to find. If they exist, the corresponding entry is inserted into context. If at any point that keyword falls out of the specified search range, the entry is removed. By being conditional, World Info presents an opportunity to write information that isn't always relevant to your story. The total history and specific qualities of your character's sword aren't going to be very useful to have in context when you're baking brownies, and World Info allows you to set up an automatic system to take it out and put it in when relevant.

What's important to take away from this is that, despite these differences, all three of these sections accomplish the same thing: inserting text into context. The only difference is where they're placed, and in the case of World Info, under what conditions.

To the AI, all of this arrives to it as a homogenous block of text, and it will treat it as such. It does not have an intricate knowledge of Memory, Author's Note, or World Info, and won't be able to discern them in context.

>If that's the case, why did you say the AI values Author's Note a lot and Memory/WI less if it doesn't have any prior knowledge to differentiate them?

Because A/N's text "just happened" in the story, whereas Memory/WI's text did not. It's all about positioning.
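To make the positioning concrete, here's a minimal sketch of how a front end might stitch these pieces together. It is not any service's actual code: `build_context`, `search_range`, `an_depth`, and `max_chars` are all hypothetical names and defaults picked for illustration.

```python
def build_context(story_lines, memory, authors_note, world_info,
                  search_range=10, an_depth=3, max_chars=8192):
    """Assemble one flat block of text: Memory and World Info at the back,
    story after them, Author's Note a few lines above the bottom.

    world_info maps a tuple of keywords to the entry text.
    """
    # World Info is conditional: an entry only joins the context if one of
    # its keywords shows up in the most recent `search_range` story lines.
    recent = " ".join(story_lines[-search_range:]).lower()
    active = [entry for keys, entry in world_info.items()
              if any(key.lower() in recent for key in keys)]

    head = ([memory] if memory else []) + active
    story = list(story_lines)

    # When space runs out, the oldest story text is dropped first.
    fixed = sum(len(text) + 1 for text in head) + len(authors_note) + 1
    while story and fixed + sum(len(line) + 1 for line in story) > max_chars:
        story.pop(0)

    # Author's Note goes near the bottom, so it reads as if it "just happened".
    if authors_note:
        story.insert(max(len(story) - an_depth, 0), authors_note)

    return "\n".join(head + story)


story = ["You wake up.", "You grab your sword.", "You walk outside.",
         "It rains.", "You sigh."]
print(build_context(story, "You are a knight.", "The mood is grim.",
                    {("sword",): "The sword is ancient."}))
```

The return value is a single string. Nothing in it tells the model which part came from Memory, A/N, or World Info; only the order differs.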

How should I style my Author's Note/Memory/World Info?

Most of the time, you should do nothing more than write it in the tense, perspective, and writing style of your main prompt. More so than ever before, memory formatting has no reason to exist; with the 1024 to 2048 token maximum that these new storytellers work with, you shouldn't be in many situations where you run out of space for all your prose entries.

But what about brackets! They—

Are primarily useful for single sentences; otherwise, the main point of "stopping the AI from regurgitating your output" becomes less obvious.

If you put an entire paragraph in brackets, the AI will be less likely to regurgitate the entire paragraph, but not every specific sentence from that paragraph. Brackets are good for influencing the AI with single sentence hints when you can't be bothered to do that through the story itself, but anything more than that rarely has a greater impact than simply writing good fucking prose.

And that doesn't even take into account the fact that the AI still recognizes it as part of the context and may pick up your shit prose anyway.

If brackets are still useful to you (that is, you constantly encounter the AI repeating your stuff verbatim, or you just get better stuff with them), then you're probably going to use them anyway. If they aren't, or you can't tell, don't rely on brackets for any more than short sentence hinting.

You're a liar! I went ahead and wrote all of my character descriptions in prose, and the AI is confusing them!

That's probably because you're using a lot of pronouns. You should stop doing that.

When you start a lot of sentences with "He" or "She" the AI isn't smart enough to figure out who you're talking about during complex situations, so it's best to keep it under control in your stories. It's fine if the story only has a few characters, but if there are a lot (especially of the same gender), you'll get moments when the AI confuses the "She" you're referring to with someone else. These pronouns are too generic when describing a character, so avoid using them as much as possible.

This can be accomplished by, for example, starting sentences describing a character with names, nicknames, or titles that define them rather than pronouns. Make sure that whatever attributes or traits you use for them are unique to them only; if some are shared, describe them in radically different ways to minimize overlap. Keep track of the adjectives and phrases you've used for each character; the AI won't know which "blond girl" you're talking about if they're all referred to as such, and your efforts will be for naught.

In other words, do something like this, though it's best to cycle through all the titles they have to make sure the AI doesn't pick up on ONLY referring to them by their name.

This is one of the few times when deviating from standard writing conventions can actually help you; however, don't use this as an excuse to go crazy with formatting or bracket usage. This should go without saying, but you don't have to use this writing style in the story itself — that would be silly.

What about the times when I'm creating characters who come from the same race or faction?

In those instances it's important to consolidate shared information so that it exists in the same place; like its own world info entry.

If, for example, three characters come from a particular faction, then create an entry for that faction, detailing the traits and characteristics shared by those members. Think uniforms, mantras, tenets, all that good stuff, anything that they all share you write down in this faction entry.

Then when creating the individual characters, all you would need to do is specify that they are a member of that faction, and spend the rest of their world info entry describing what is unique to them.

The same can be said for a race: if you have several characters that share the same race, describe the similar characteristics in a separate race entry, and describe the unique characteristics in each of the characters' entries. Then connect it all up by specifying their race. You really don't need to do any more than that in most cases.

Your explicit connections can be a pair of sentences:

- "John is a decorated general of the Trapezium Dynasty." for the John entry.

- "The Trapezium Dynasty employs many soldiers, the most notable being: John." for the Trapezium Dynasty entry.

Anything pertaining to John the individual gets put in John, anything pertaining to the faction John is in gets put in Trapezium Dynasty.

Finally, in terms of handling trigger terms between them, set up the faction/race entry to show up not only when its name is used but also for each of the relevant characters. Do not do this the other way around, as that would lead to all the characters showing up because you typed the faction/race name.
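Here's a small sketch of that one-way trigger setup, reusing the John example. The entry layout (`keys`, `text`) and the `triggered` helper are made up for illustration and don't match any particular storyteller's format.

```python
# The faction entry lists its members as keys; the character entry does NOT
# list the faction. Typing "John" pulls in both, typing the faction name
# pulls in only the faction.
world_info = {
    "John": {
        "keys": ["John"],
        "text": "John is a decorated general of the Trapezium Dynasty.",
    },
    "Trapezium Dynasty": {
        "keys": ["Trapezium Dynasty", "John"],
        "text": "The Trapezium Dynasty employs many soldiers, "
                "the most notable being: John.",
    },
}


def triggered(text: str) -> list:
    """Names of the entries whose keys appear in the given text."""
    lowered = text.lower()
    return [name for name, entry in world_info.items()
            if any(key.lower() in lowered for key in entry["keys"])]


print(triggered("John draws his blade."))
print(triggered("The Trapezium Dynasty marches at dawn."))
```

With ten characters in the faction, the reverse setup would dump all ten entries into context every time the faction got mentioned, which is exactly the token waste you're trying to avoid.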

It's important to minimize repeating yourself verbatim in scattered places. Make your Memory, World Info, and A/N centralize the location of semantically similar details.

NovelAI

What is NovelAI?

NovelAI is a subscription service that hosts GPT models fine-tuned to produce high-quality prose, which the user can then use to dynamically generate completions for storytelling purposes.

How much does NovelAI cost?

NovelAI has a free trial and offers three subscription tiers.

The free trial works like this:

- Before registration, you are allowed 50 actions to generate AI responses with.

- Once they run out, you can register an account with the service and verify your email to net a further 50 actions.

- You can't generate AI responses once you've run out of these actions. The next step would be to select a tier to subscribe to.

The three subscription tiers are as follows:

- $25 Opus Tier - Access to Euterpe (a fine-tuned 13B parameter model), Unlimited Max Priority Actions, 2048 Token Context Window, 600 Characters Max Output Length, Experimental Features, 8000 Steps per month to train custom modules.

- $15 Scroll Tier - 1000 Max Priority Actions each week, 2048 Token Context Window, 100 Max Output Token Length, 500 steps per month to train custom modules.

- $10 Tablet Tier - 1000 Max Priority Actions each week, 1024 Token Context Window, 100 Max Output Token Length, 500 steps per month to train custom modules.

Scroll tier is the best to go for if you're just starting the service. The extra 1024 tokens make a huge difference in play compared to just half.

NovelAI also comes with the ability to purchase perpetual module training steps:

- $3.79 for 2,000 steps

- $6.49 for 5,000 steps

- $10 for 10,000 steps

As said before, these purchased steps persist until you use them.

What models does NovelAI have available?

Currently NovelAI has multiple: Calliope, Sigurd, and Euterpe. All are fine-tuned on their own custom dataset.

Calliope is based on GPT-Neo (2.7B parameters), Sigurd is based on GPT-6B-J, and Euterpe is based on Fairseq 13B.

Sigurd is the name of NovelAI fine-tuned GPT-J-6B model. This model launched during the Beta as an Experimental Feature for our opus tier subscribers and has since been rolled out to all tiers. Newer Sigurd models will continue to release as Experimental Feature for Opus subscribers first.

Sigurd is a much bigger model and response times are a little slower in comparison to Calliope, our 2.7B model.

NovelAI's FAQ

What do we know about their fine-tuning dataset?

The specifics have yet to be revealed by any member of the team, but we do have a general understanding. Kurumuz and his colleagues stated in the early days of the project that they planned to work primarily with books, light novels, and high-quality novellas for their dataset.

The first version of the set was used to fine-tune Calliope, then Sigurd (5% at V1, then completed at V2), but there was an oversight regarding dialogue quality, which necessitated a dataset pruning to remove offending stories. After that, they fine-tuned again, resulting in Sigurd V3. Finally they expanded and improved the dataset to culminate in the 4th iteration of Sigurd. It's believed that the dataset is now >10 GB in size, though new text is constantly added to the dataset by the fine-tuning team.

What advantages does NovelAI have over AI Dungeon?

Simply put, NovelAI outperforms AI Dungeon in terms of UI and UX. Its Sigurd model outperforms Griffin in its current form, and while it isn't as good as Dragon was in its prime, the lack of CYOAIDS more than compensates for the difference in parameter count. NovelAI is currently the best option if you are able and willing to pay for it.

The default settings aren't doing it for me, but I don't know what to do regarding generation options. Help?

You can take a look at our collection of settings or the section in the unofficial knowledge base regarding the matter.

Can Sigurd understand [ESTABLISHED FRANCHISE]?

Not well. Partially because of GPT-6B-J's training and partially because of fine-tuning, Sigurd can recall concepts and information from most popular media, but lacks the 'understanding' necessary to correctly contextualize these things. It may know Pokemon abilities but assign them to the wrong Pokemon, for example. Most of the time, creating a lorebook is your best bet.

Is there any place I can go to see if others have made lorebooks of my favorite things?

Right now there are two main places: the NovelAI discord and the lorebook repository.

What are 'Story Parameters'?

Based on the way NovelAI's fine-tuned dataset was tagged, story parameters refers to the concept of recreating said tag in your own story to steer NovelAI in a certain direction. Format shit that works.

Okay, so where can I find tried and tested values?

What are 'AI Modules'?

AI Modules are a 20 token sacrifice that can be used to influence the AI's writing style to match that of a module. This is accomplished by superimposing a twenty token embedding on top of the context, which was previously trained on a dataset for a specific (relatively small) number of steps.

Modules are extremely effective at changing the AI's word choice and general style to what you want, and most people use them for three things: concepts, settings, and styles. While this method is ineffective for teaching Sigurd entirely new things, it is effective for shifting its priorities to focus on other shit that it already knows.

NovelAI allows you to train your own custom modules to use in your stories.
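For the curious, here's roughly what "a twenty token embedding on top of the context" could look like. This assumes modules behave like a prompt-tuning style soft prompt that gets prepended to the embedded context; NovelAI hasn't confirmed the details here, and `apply_module` and the tiny vector size are purely illustrative.

```python
MODULE_TOKENS = 20  # the "20 token sacrifice" mentioned above


def apply_module(module_embeddings, context_embeddings, max_positions=2048):
    """Prepend a module's trained vectors to the embedded context.

    Both arguments are lists of vectors (lists of floats). The module takes
    20 positions, so only the most recent (max_positions - 20) context
    vectors still fit.
    """
    if len(module_embeddings) != MODULE_TOKENS:
        raise ValueError("a module is expected to be exactly 20 vectors")
    room = max_positions - MODULE_TOKENS
    return module_embeddings + context_embeddings[-room:]


# Toy 4-dimensional vectors standing in for real embeddings.
module = [[0.1, 0.2, 0.3, 0.4] for _ in range(MODULE_TOKENS)]
context = [[1.0, 0.0, 0.0, 0.0] for _ in range(2048)]
combined = apply_module(module, context)
print(len(combined), len(context) - (len(combined) - MODULE_TOKENS))
```

The model's own weights are untouched in this picture, which fits the point above: a module can shift what the model prioritizes, but it can't teach it entirely new things.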

That sounds amazing! Where can I go for modules?

You can join the NovelAI discord or the unofficial NSFW dens, or if you're not a woman, come to our super cool man cave module repository.

What is 'Phrase Biasing'?

You can use Phrase Biasing to increase or decrease the likelihood of certain words, sentences, or even raw token values occurring in a generation. They're great, but they're extremely granular, so consult the wiki or something.

It differs from standard logit biasing in that it can work with entire sequences of tokens rather than individual ones, and it is context adaptable—the effect is consistent whether the context is filled or empty, allowing for things like basic arithmetic to be performed with bias values.

Also you can use them with world info entries. It's great.
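For comparison, this is what plain per-token logit biasing looks like, i.e. the standard thing Phrase Biasing is said to improve on. It's a generic sketch with made-up scores, not NovelAI's implementation, and it doesn't handle multi-token phrases.

```python
import math


def softmax(logits):
    """Turn raw token scores into probabilities that sum to 1."""
    peak = max(logits.values())
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


def apply_bias(logits, biases):
    """Add a flat bias to the listed tokens before sampling."""
    return {tok: score + biases.get(tok, 0.0) for tok, score in logits.items()}


# Made-up scores for three candidate next tokens.
logits = {" sword": 1.0, " brownie": 1.0, " dragon": 0.5}
before = softmax(logits)
after = softmax(apply_bias(logits, {" brownie": 2.0}))
print(round(before[" brownie"], 3), round(after[" brownie"], 3))
```

A bias of b multiplies that token's odds against every other token by e^b, so positive values push a word in and negative values push it out.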

Have others made bias sets?

Who the fuck is Turk?

kurumuz, or Turk when his backend is failing, is the lead developer and main guy behind NovelAI. He was an Anon like you and me, and stepped up almost immediately after Latitude confirmed their regex filter chicanery to create an alternative. Considering how good it is, and that he still posts to the general, he's cool in my book.

Who the fuck is finetuneanon?

finetuneanon is another veteran of the general who is now on the AI development team for NovelAI. He is best known for his fine-tuning work on GPT-Neo 2.7B, creating the horni fine-tuned models, one trained on a literotica dataset and the other on a light novel dataset. Granted, they weren't all that great, but he has since acknowledged that they could have been done better. Be sure to send him your love when he posts.

Who the fuck is Aini?

Aini is the community manager for NovelAI. She was a regular in the /aidg/ threads who would occasionally drawfag, but has since moved on to the NovelAI discord.

HoloAI

What is HoloAI?

HoloAI is another online alternative, offering a fine-tuned GPT-J-6B for a more economical price point.

How much does HoloAI cost?

HoloAI does have a free tier, with limitations on settings and a max generated output character limit.

It offers a Pro-Tier with higher limits for $5 a month, and an unlimited Elite Tier for $8 a month.

HoloAI plan to offer a $12 tier with access to their eventually fine-tuned GPT-NeoX-20B, and 2000 training steps for AI modules.

What do we know about their fine-tune dataset?

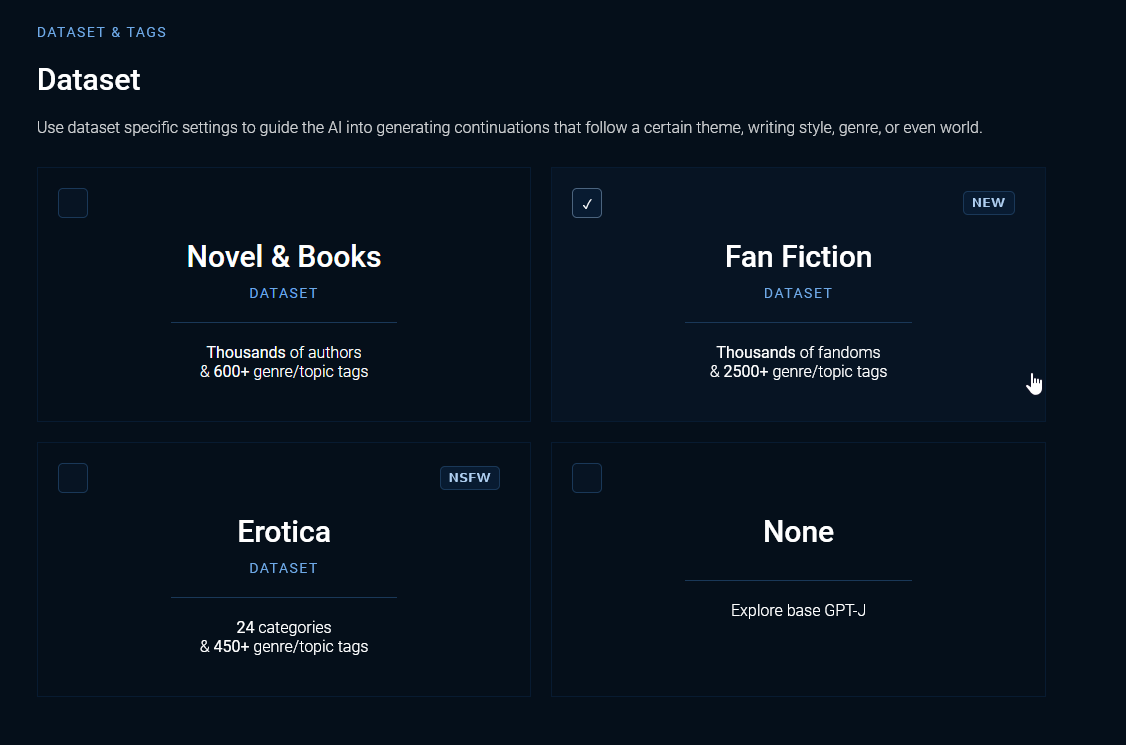

The concept is the same as NovelAI: cultivate a dataset of high-quality literature with the goal of improving the model's prose. But thanks to their Dataset and Tags feature, I can actually make an educated guess about where they've gone to collect them.

As of the time of this writing, HoloAI's dataset seems to include the following sources: Goodreads, Literotica, FanFiction and Archive of Our Own.

You can switch between these and fill in the relevant information to customize how your story is told. What you can choose from varies slightly depending on the dataset you select. For example, with Novel & Books, you can choose renowned authors for the AI to emulate their style, which it will do to the best of its ability.

Is the dataset better than NovelAI's?

Nobody can say which is better, but they're both good in their current forms and accomplish their goals. What I will say is that HoloAI's dataset tagging is easy to recreate via a GUI front end, which gives it an advantage in terms of usability.

What advantages does HoloAI have over AI Dungeon?

It provides a fine-tuned GPT-6B experience with frequent significant updates and measures taken to allow you to secure your stories, while also providing unlimited generations for a lower price than AI Dungeon. So, essentially, a simpler version of NovelAI at a more competitive cost.

What advantages does HoloAI have over NovelAI?

So far, there haven't been many; the price it offers is its biggest strength. HoloAI's early days were rocky because they prioritized release before NovelAI could even announce a date for the public beta, and they initially offered the Megatron transformer model, which sucked. They are working on various features and a revamp of their own fine-tuning dataset, and have implemented markdown support for not only the user side, but also the model side. They also include data settings to direct their AI; similar in concept to story parameters, but officially implemented through the front end and verifiable as a result.

It does not, however, have any distinguishing features over NovelAI at this time. They have AI modules and a version of phrase biasing, but there's no way to modify the formation of context (positions and insertion orders of World Info entries, memory, or author's note), and what settings it does provide are still rudimentary. Some may say that this leads to a less daunting experience, but for the experienced, it can lead to feeling stuck when a model isn't doing what you want it to do. Perhaps the arrival of 20B will change this.

If you're on a budget, HoloAI is a good alternative to NovelAI and a good place to start for premium storyteller services, but NovelAI has it beat in terms of control, malleability, and adaptability.

KoboldAI

What is KoboldAI?

KoboldAI is a browser-based front end for transformer AI models stylized in a similar vein to AI Dungeon. Originally created and used for GPT-Neo models and smaller, it's found new life with colab notebooks, where you rely on Google's spare computing power to run larger models that you then directly interface with in your locally run KoboldAI client.

How much does it cost?

It doesn't cost anything, but it does require some tinkering and a fair bit of time. It's a lot easier to set up now, and they've tried to circumvent the "insufficient memory" notebook runtime problem. Google may close your session due to 'inactivity,' and they will track your usage over time in order to change your usage limit. You can avoid these by purchasing Google's $10 Colab Pro subscription, but at that point you might as well use one of the other paid alternatives.

It may go without saying, but you're going to need to use a Google account.

Notebooks run by connecting to virtual machines that have maximum lifetimes that can be as much as 12 hours. Notebooks will also disconnect from VMs when left idle for too long. Maximum VM lifetime and idle timeout behavior may vary over time, or based on your usage. This is necessary for Colab to be able to offer computational resources for free. Users interested in longer VM lifetimes and more lenient idle timeout behaviors that don't vary as much over time may be interested in Colab Pro.

How does KoboldAI fare against NovelAI or HoloAI?

It's alright.

NovelAI slaps it silly with its premium features, improved user experience, custom modules, and unrivaled customization.

HoloAI's dataset options and user-friendly setup make it the best choice for those willing to pay but not excessively.

But if you don't want to pay, or hate subscriptions, KoboldAI is still a good choice.

Unlike these two, KoboldAI does offer fine-tuned 6B models designed with the second person in mind like Skein, implying that it outperforms on CYOA stories.

Security-wise, its leveraging of Google's colab isn't all that great. Errors, rare as they currently are, can be quite perplexing; while GitHub has a nice FAQ for common ones, the fact that they can occur due to nothing more than ostensible "chance" is annoying, especially given the long startup times. Restarting runtimes to obtain an appropriate GPU/TPU is also inconvenient, though to be fair this problem has been mitigated with colab notebook fuckery on their part. Nice one, Henk.

So yeah, it's alright.

And if I run it native?

KoboldAI supports Breakmodel, which allows you to split a model across your GPUs (or even your CPU), but generation speed is significantly slower and the number of tokens available is limited. Otherwise, you'll need at least 16 GB of VRAM to run a 6B model locally. Or if you're looking for 13B, that requires around 38 GB of VRAM. The unrivaled privacy is nice, though.

If you're referring to GPT-Neo, using that for almost any narrative is akin to attempting to talk with your elderly grandmother suffering from dementia. Sure, there are moments of clarity here and there, but they're so rare and sporadic amongst the perpetual incoherency that they unnerve rather than please, almost as if the fact that it happened at all is something that shouldn't be possible.

When you combine that with the model's less-than-stellar fine-tunes, you have a recipe for a massive waste of computing power. Use their colabs, consumer GPUs aren't quite there yet.

Local model use is simply too intensive, too slow, and too limited in features to beat premium storytellers. Only consider if you're financially fortunate, or paranoid.

How does KoboldAI fare against AI Dungeon?

It's better than AI Dungeon.

How do I get it all working?

A guide to set up KoboldAI exists, and a guide to modify it to work on external devices on the same local network exists, but you don't need either anymore. They're both outdated to the point that continuing to link them would fuck you over.

Instead, current KoboldAI setup is as simple as downloading their offline installer and running through the executable steps. If you'd like to have it work for external devices, run the remote-play.bat once installation is done.

Can't I just try and run it all on my own machine?

Only if you're ready for slow speeds or have 16 gigs of VRAM on your machine. Otherwise, you're stuck with colab.

/aids/

'/aids/'?

Originally we were going to die as /aidg/, but instead we eventually settled on a rebranding to AI Dynamic Storytelling. Not dead yet, it seems.

But if NovelAI is the popular choice, why not rebrand to that?

The self-immolation of Latitude taught us that naming ourselves after a specific service was a bad idea if that service was going to turn around and shit on you later. It is preferable to cover the entire field rather than just one storyteller, regardless of who is the most popular option.

>A lot of words for "it was funny so we kept it"

What are "Theme Fridays"?

Theme Fridays are a biweekly event where Anons post prompts built around a shared theme that's picked every other week.

Originally the theme was decided on a vote, but we decided to change to a random pick using the current thread's post number as a seed for reproducibility after one of our votes was rigged. That ended up being a crapshoot since chance-based selection wasn't fun and themes had to be less specific to appeal to a large number of people, leading to transformation friday.

We're back to voting for the foreseeable future.
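For the record, the seeded pick we tried works something like the sketch below. The theme names and post number are invented; the only real idea here is that seeding the generator with the post number lets anyone rerun the pick and get the same result.

```python
import random


def pick_theme(themes, post_number):
    """Pick a theme reproducibly, using the thread's post number as the seed."""
    rng = random.Random(post_number)   # same seed, same sequence of picks
    return rng.choice(sorted(themes))  # sort so list order can't change the result


# Hypothetical suggestions and post number.
themes = ["heist", "transformation", "haunted house", "space opera"]
print(pick_theme(themes, 357912468))
```

Reproducible doesn't mean fun, though, which is why it got dropped.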

What is the state of the general?

Dragon is dead, leaving the above-mentioned alternatives as platforms people are using. Things are slow, but constant. Both HoloAI and NovelAI are improving their services, but NAI has the upper hand with Euterpe.

20B is training, but no one knows when it'll end—or if it'll be any good. Here's hoping!

What happened to the original coomer's guide?

It's still available due to being hosted on GitHub, but, yeah, the main guy behind it is gone. That's why this is here.

What happened to clubanon?

What do you mean? He's still around